OK, so I ran my Obviousity Index test on several of the classes I’ve taken (not enough for a comprehensive scientific analysis, but enough to inform this conversation).

Remember from yesterday that the Obviousity Index is derived from MOOCs where the final grade is based entirely on how a student performs in automatically scored quizzes (no papers or other rubric-scored exercises, whether self- or peer-graded).

And for each test that contributed to the overall grade for the course, I determined what score I would have gotten if I did nothing more than:

- Answer each multiple choice question by choosing the longest answer; or

- For multiple choice questions that had “All of the above” or “None of the above” as options, choose “All” (if it was available) or “None” (if that was the only “…of the above” type choice); and

- For any question with just two answers (True/False, Yes/No, Increase/Decrease or something similar), choose my answer through a coin flip.
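For readers who like to see rules written down as code, here is a minimal Python sketch of the three rules above. The function name `obviousity_guess` and the order in which the rules are checked (two-answer items first, then the “…of the above” options, then the longest answer) are my own assumptions for illustration; I applied the rules by hand, not with a script.

```python
import random


def obviousity_guess(options, rng=random):
    """Pick an answer using only surface features of the answer options.

    `options` is a list of answer strings. The rule order below is an
    assumption made for this sketch, not something the rules specify.
    """
    # Two-answer items (True/False, Yes/No, ...): flip a coin.
    if len(options) == 2:
        return rng.choice(options)

    normalized = [opt.strip().lower() for opt in options]

    # Prefer "All of the above" when offered, otherwise "None of the above".
    for phrase in ("all of the above", "none of the above"):
        if phrase in normalized:
            return options[normalized.index(phrase)]

    # Otherwise choose the longest answer (the first one wins any tie).
    return max(options, key=len)
```

Calling `obviousity_guess(["Rome", "The capital city of France", "Oslo", "Bern"])` picks the longest option without any regard to what the question asked, which is the whole point.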

Because some of the courses used in this analysis are still live (or may be repeated), I’ve chosen not to specify which MOOCs I’m talking about (although a long-term reader or enterprising cheater could probably figure that out).

OK, so starting out, here are the results from a humanities course where 5% of the grade was based on each of five 5-question quizzes (25% in total) and 75% of your grade came from your performance on a 25-question final exam. (The pass/fail cutoff score for the entire class was 60%.)

As you can see from the data, blindly following the rules noted above (without paying the slightest attention to the material in the test or the course) earned me a score of 40%.

Now 40% would earn me a Fail (which is good). But keep in mind that since these tests were mostly made up of multiple choice questions with five answers, blind guessing should have given me a score closer to 20% (which means these test questions suffered from the longest answer being the correct one a few times too often). And while 40% is still below the cutoff, notice that I was able to get two-thirds of the way to that passing score by mindlessly applying a few rules that took advantage of mistakes test creators often make when writing questions.

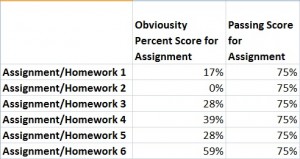
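The arithmetic behind that first course can be sketched in a few lines of Python. The helper names (`chance_baseline`, `weighted_grade`) are hypothetical, and the only figures used are the ones quoted in the text: five answer options, a 40% score, a 60% cutoff, and the 5%-per-quiz plus 75%-final weighting.

```python
def chance_baseline(num_options):
    """Expected score from blind guessing on items with `num_options` answers."""
    return 1 / num_options


def weighted_grade(quiz_scores, final_score, quiz_weight=0.05, final_weight=0.75):
    """Course grade: each quiz counts `quiz_weight`, the final counts `final_weight`.

    Scores are fractions between 0 and 1. The default weights follow the
    grading scheme described for this course (five quizzes at 5% each).
    """
    return quiz_weight * sum(quiz_scores) + final_weight * final_score


baseline = chance_baseline(5)       # blind guessing on five-answer items
progress_to_pass = 0.40 / 0.60      # Obviousity score relative to the cutoff

print(f"chance baseline: {baseline:.0%}")
print(f"share of the passing score reached: {progress_to_pass:.0%}")
```

The gap between the chance baseline and the score actually earned is the part that can be blamed on question-writing habits instead of luck.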

Moving on, here are the results from a second humanities course where the final grade was based on passing each of six homework assignments with a score of at least 75%:

In this case, the homework quizzes had very few multiple choice questions featuring long answers (most MC items just used numbers or names that didn’t give the game away). Tests for this course also had a fair share of multiple response and open response (fill-in-the-blank) questions, which I automatically assigned an Obviousity rating of 0.

But these tests also included a large portion of items with only two answers (true-false and variants), which I answered based on a coin toss. That is why quizzes with high proportions of two-answer items (like #4 and #6) had total Obviousity scores close to (one even above) the 50% you’d expect to earn by randomly guessing the answer in a test made up entirely of true-false items.

Given the nature of the test items, a cutoff score of 75% (vs. a threshold closer to 50%) was warranted. But these tests could have also been made more challenging (or at least less susceptible to Obviousity problems) if they made lighter use of true-false and similar two-answer question styles.

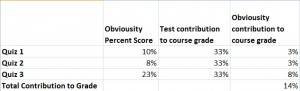
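To put rough numbers on the coin-toss effect in that second course, here is a small Python sketch. Both function names are hypothetical, and the first one assumes every item that is not a two-answer question scores 0 (so it ignores any lucky hits from the longest-answer rule). The second computes the exact binomial chance of reaching a cutoff on a quiz made up entirely of two-answer items.

```python
from math import comb


def expected_coin_flip_score(num_two_answer, num_zero_rated):
    """Expected score when two-answer items are coin flips and all other
    items are assumed to score 0 (an assumption made for this sketch)."""
    total = num_two_answer + num_zero_rated
    return 0.5 * num_two_answer / total


def prob_pass_by_coin_flip(num_questions, cutoff_percent):
    """Chance of meeting the cutoff on an all-two-answer quiz by coin flips.

    `cutoff_percent` is a whole number such as 75. Integer arithmetic is
    used to find the minimum number of correct answers needed.
    """
    needed = (cutoff_percent * num_questions + 99) // 100  # ceiling division
    favorable = sum(comb(num_questions, k)
                    for k in range(needed, num_questions + 1))
    return favorable / 2 ** num_questions


# Hypothetical 20-item true-false quiz (not one of the quizzes charted above).
print(prob_pass_by_coin_flip(20, 50))
print(prob_pass_by_coin_flip(20, 75))
```

Comparing the two printed values shows why a 75% threshold is much harder to stumble over than one near 50% when a quiz leans heavily on true-false items.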

Finally, here are the results from a statistics class where the grade was based on three assessments given at different points during the course, each of which consisted of 25-31 questions:

Here you’ll notice that, except for the third quiz, Obviousity issues were not prevalent. That is largely because the nature of the material meant a majority of items were open-ended (requiring you to perform statistical calculations in order to get the right answer).

So what does this (admittedly preliminary) analysis teach us?

Tune in next time to find out.
